AutoCAD, a leading computer-aided design (CAD) program, is an essential tool for architects, engineers, and designers worldwide. While professional courses are available, many aspiring users choose to learn AutoCAD on their own, thanks to abundant self-learning resources. If you’re motivated and ready to dive into the world of CAD, here’s a step-by-step guide to mastering AutoCAD independently.

1. Understand What AutoCAD Is

Before jumping in, it’s crucial to understand the basics:

- Purpose: AutoCAD is used for creating 2D drawings and 3D models.

- Applications: It’s commonly used in architecture, mechanical design, civil engineering, and interior design.

- Features: Familiarize yourself with drafting, dimensioning, and rendering capabilities.

2. Get the Right Software

Download AutoCAD:

Visit Autodesk’s official website to download a free trial version of AutoCAD. If you’re a student, Autodesk offers free access through its education program.

Check System Requirements:

Ensure your computer meets the hardware and software requirements to run AutoCAD smoothly.

3. Familiarize Yourself with the Interface

AutoCAD’s interface can seem complex at first, but breaking it down helps:

- Ribbon: Contains tabs and panels with tools for drawing, modifying, and managing projects.

- Command Line: A key feature where you input commands like LINE, CIRCLE, and OFFSET.

- Drawing Area: The workspace where designs come to life.

- Navigation Tools: Includes zoom, pan, and view controls for navigating your drawing.

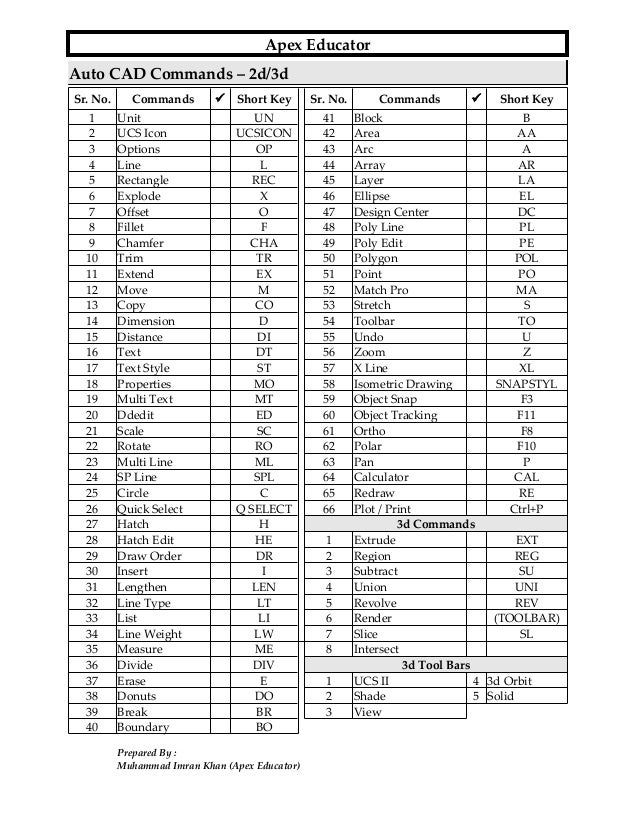

4. Start with Basic Commands

Learning AutoCAD starts with mastering its essential commands:

- Drawing Commands: LINE, CIRCLE, RECTANG (rectangle), POLYGON.

- Editing Commands: TRIM, EXTEND, COPY, MOVE, ROTATE.

- Dimensioning Tools: DIMLINEAR, DIMANGULAR, and DIMRADIUS for adding measurements.

- Layer Management: Create and manage layers to organize your drawing.

Practice each command individually to understand its functionality.
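
One way to see how typed commands fit together is to write them into an AutoCAD script file (.scr), a plain text file that AutoCAD replays through the SCRIPT command as if you had typed each entry at the command line. The Python sketch below is only an illustration: the function name `rectangle_script` and the dimensions are made up for this example. It builds the text for a closed rectangle drawn with LINE and a centered hole drawn with CIRCLE.

```python
def rectangle_script(width: float, height: float, hole_radius: float) -> str:
    """Return AutoCAD script text for a rectangle with a centered circle.

    In a script file, spaces and line breaks act like pressing Enter,
    so each line below mirrors what you would type at the command line.
    """
    corners = [(0, 0), (width, 0), (width, height), (0, height)]
    points = " ".join(f"{x:g},{y:g}" for x, y in corners)
    lines = [
        # LINE takes a series of points; "C" closes the shape and ends the command.
        f"LINE {points} C",
        # CIRCLE takes a center point followed by a radius.
        f"CIRCLE {width / 2:g},{height / 2:g} {hole_radius:g}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical practice part: 100 x 50 units with a hole of radius 10.
    print(rectangle_script(100, 50, 10), end="")
```

Saving the printed text as a file such as practice.scr (a hypothetical name) and loading it with SCRIPT should draw the shape. Typing the same entries by hand first is still the better way to learn what each prompt expects.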

6. Follow Structured Tutorials

Work through beginner tutorials that guide you through real-world projects. Several websites offer them:

- myCADsite: Provides free lessons categorized by skill level.

- LinkedIn Learning (formerly Lynda.com): Offers professional courses, often with free trials.

- Coursera and Udemy: Feature affordable AutoCAD courses with certificates.

7. Practice with Real-Life Projects

Hands-on practice is crucial to mastering AutoCAD. Start with simple projects:

- Create a floor plan for a room.

- Draft a simple mechanical part like a gear or bolt.

- Experiment with 3D objects like cubes or cylinders.

Gradually increase the complexity as you grow comfortable with the software.
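
When drafting a floor plan, it helps to know the expected area and perimeter beforehand so you can check your drawing, for example against what the AREA command reports. The sketch below is a rough aid under stated assumptions: the L-shaped room and its corner coordinates (in meters) are invented for illustration, and the area comes from the standard shoelace formula for a simple polygon.

```python
import math


def polygon_area(corners):
    """Area of a simple polygon from its corner points (shoelace formula)."""
    total = 0.0
    n = len(corners)
    for i in range(n):
        x1, y1 = corners[i]
        x2, y2 = corners[(i + 1) % n]  # wrap around to close the outline
        total += x1 * y2 - x2 * y1
    return abs(total) / 2


def polygon_perimeter(corners):
    """Total wall length around the outline."""
    n = len(corners)
    return sum(math.dist(corners[i], corners[(i + 1) % n]) for i in range(n))


if __name__ == "__main__":
    # Hypothetical L-shaped room, corners listed in order around the outline.
    room = [(0, 0), (6, 0), (6, 3), (4, 3), (4, 5), (0, 5)]
    print("area:", polygon_area(room))            # 26.0 square meters
    print("perimeter:", polygon_perimeter(room))  # 22.0 meters
```

If the values in your drawing differ from the ones you computed, a corner was probably entered incorrectly or a segment was left open.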

8. Explore Advanced Features

Once you’re confident with the basics, delve into advanced functionalities:

- 3D Modeling: Learn commands like EXTRUDE, REVOLVE, and SWEEP to create 3D objects.

- Parametric Design: Use constraints to create intelligent designs that adjust dynamically.

- Rendering: Experiment with materials and lighting to bring 3D models to life.
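
A quick way to build intuition for EXTRUDE and REVOLVE is to predict the volume of the solid before creating it, then compare with what AutoCAD reports (the MASSPROP command lists the volume of a solid). The following sketch assumes idealized profiles and uses two textbook results: an extrusion's volume is profile area times height, and a revolved solid's volume follows Pappus's centroid theorem (sweep angle in radians times the centroid's distance from the axis times the profile area). The function names and dimensions are illustrative only.

```python
import math


def extruded_volume(profile_area, height):
    """Volume of a profile extruded straight up by the given height."""
    return profile_area * height


def revolved_volume(profile_area, centroid_distance, angle_degrees=360.0):
    """Volume of a profile revolved about an axis (Pappus's centroid theorem).

    centroid_distance is the distance from the profile's centroid to the axis.
    """
    return math.radians(angle_degrees) * centroid_distance * profile_area


if __name__ == "__main__":
    radius, height = 10.0, 40.0

    # Cylinder made by extruding a circle of radius 10 to a height of 40.
    by_extrude = extruded_volume(math.pi * radius**2, height)

    # Same cylinder made by revolving a 10 x 40 rectangle about its long edge;
    # the rectangle's centroid sits radius / 2 away from the axis.
    by_revolve = revolved_volume(radius * height, radius / 2)

    print(by_extrude, by_revolve, math.isclose(by_extrude, by_revolve))
```

Both routes describe the same cylinder, so the two values agree. That is a useful reminder that many 3D shapes can be built with more than one command.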

9. Troubleshoot and Learn

Mistakes are part of the learning process. Use these strategies to troubleshoot:

- Check the command line for prompts and error messages; pressing F2 expands the command history.

- Search for solutions online or post queries in forums.

- Experiment with different tools to achieve desired results.

10. Build a Portfolio

As you practice, save your projects and organize them into a portfolio. This demonstrates your skills and can be useful when applying for jobs or freelance work.

11. Stay Updated

AutoCAD evolves with each release. Stay updated by:

- Following Autodesk’s official blog.

- Attending webinars and online events.

- Learning about new features introduced in updates.

12. Develop Discipline and Patience

Self-learning requires dedication. Set aside regular time to practice, be patient with the learning curve, and celebrate small milestones to stay motivated.

Conclusion

Learning AutoCAD on your own is entirely achievable with the right resources and consistent practice. By starting with the basics, leveraging online tutorials, and tackling real-life projects, you can become proficient in AutoCAD and unlock opportunities in architecture, engineering, and design.