AutoCAD is one of the most widely used computer-aided design (CAD) software tools in the world. Known for its precision, versatility, and compatibility across industries like architecture, engineering, and product design, it has set a high standard in digital drafting. However, it’s not the only option available.

Whether you want a more affordable tool, need specific features, or are just exploring your options, several CAD tools serve as strong alternatives to AutoCAD. Here’s a look at some of the most popular ones.

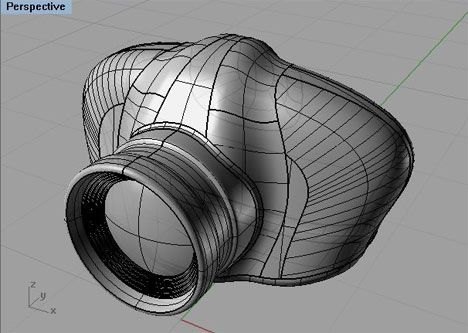

1. SolidWorks

Best for: 3D mechanical modeling and product design.

SolidWorks is a 3D CAD package used primarily in mechanical engineering and product design. While AutoCAD covers both 2D drafting and 3D design, SolidWorks specializes in parametric modeling, assemblies, and simulation.

Key features:

- Advanced 3D modeling tools.
- Simulation and analysis capabilities.
- Engineering-driven design interface.

2. SketchUp

Best for: Architectural and interior design.

SketchUp is known for its user-friendly interface and is especially popular among architects, interior designers, and hobbyists. It’s ideal for quickly visualizing concepts in 3D.

Key features:

- Easy to learn and use.
- Large library of pre-built models.
- Integration with VR and AR tools.

3. Revit

Best for: Building Information Modeling (BIM).

Developed by Autodesk (like AutoCAD), Revit is tailored for BIM and is widely used in the architecture, engineering, and construction (AEC) industries. It allows collaboration among architects, engineers, and contractors in a shared model environment.

Key features:

- Intelligent 3D model-based design.
- Automated building documentation.
- Strong collaboration tools.

4. BricsCAD

Best for: Cost-effective AutoCAD alternative.

BricsCAD offers an interface and command structure similar to AutoCAD’s, which makes the transition easy for experienced AutoCAD users. It supports both 2D drafting and 3D modeling.

Key features:

- Native DWG file support.
- Familiar user interface.
- AI-assisted features like Blockify.

5. LibreCAD

Best for: Open-source 2D drafting.

LibreCAD is a free and open-source CAD application suitable for simple 2D drafting. It’s a great choice for students, educators, and small businesses looking for a budget-friendly option.

Key features:

- Lightweight and fast.
- Customizable UI.
- Supports DXF files.

6. Fusion 360

Best for: Product development and industrial design.

Another tool from Autodesk, Fusion 360 is an integrated, cloud-based CAD/CAM/CAE platform. It’s widely used in mechanical and industrial design and supports real-time collaboration.

Key features:

- Parametric and freeform modeling.
- Simulation and manufacturing tools.
- Cloud collaboration.

7. TinkerCAD

Best for: Beginners and education.

TinkerCAD is a simple, web-based CAD tool perfect for beginners, hobbyists, and students. It’s commonly used for 3D printing projects and basic modeling tasks.

Key features:

- User-friendly drag-and-drop interface.
- Ideal for kids and STEM learning.
- Free to use.

Final Thoughts

AutoCAD may be the industry leader, but many alternatives cater to specific needs, whether that’s 3D mechanical design, architectural modeling, open-source 2D drafting, or cost-effective DWG-compatible work. The best software for you depends on your goals, budget, and skill level.

Before choosing, ask yourself:

- Do I need 2D drafting or 3D modeling?
- Is collaboration important?
- What’s my budget?
- Do I need specialized tools (e.g., BIM, CAM, simulation)?
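If it helps to make those questions concrete, here is a rough Python sketch that turns them into a simple filter over the seven tools covered above. The attribute values are assumptions drawn only from what this article highlights for each tool (for example, `collaboration=False` just means collaboration isn't called out above, not that the tool lacks it), and the `Tool` class and `shortlist` function are hypothetical names made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    dims: frozenset          # subset of {"2D", "3D"}
    collaboration: bool      # collaboration highlighted in this article?
    free: bool               # described as free in this article?
    specialties: frozenset   # e.g. {"BIM", "CAM", "simulation"}


# Assumption: values reflect only the features listed in this article,
# not a full feature survey of each product.
TOOLS = [
    Tool("SolidWorks", frozenset({"3D"}), False, False, frozenset({"simulation"})),
    Tool("SketchUp", frozenset({"3D"}), False, False, frozenset()),
    Tool("Revit", frozenset({"3D"}), True, False, frozenset({"BIM"})),
    Tool("BricsCAD", frozenset({"2D", "3D"}), False, False, frozenset()),
    Tool("LibreCAD", frozenset({"2D"}), False, True, frozenset()),
    Tool("Fusion 360", frozenset({"3D"}), True, False, frozenset({"CAM", "simulation"})),
    Tool("TinkerCAD", frozenset({"3D"}), False, True, frozenset()),
]


def shortlist(dim, need_collaboration=False, free_only=False, specialty=None):
    """Return the names of tools that pass every requested filter."""
    return [
        t.name
        for t in TOOLS
        if dim in t.dims
        and (t.collaboration or not need_collaboration)
        and (t.free or not free_only)
        and (specialty is None or specialty in t.specialties)
    ]


if __name__ == "__main__":
    print(shortlist("2D"))                    # 2D drafting options
    print(shortlist("3D", specialty="BIM"))   # 3D with BIM
    print(shortlist("3D", free_only=True))    # free 3D options
```

A real decision would weigh many more factors (licensing terms, file formats, platform support), so treat this as a way to organize your answers rather than a recommendation engine.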

Whatever your requirements, the CAD world is full of powerful tools beyond AutoCAD, and exploring them can open new possibilities in your design journey.